This essay begins with a deceptively simple question: What work do images do in AI systems? What are computers meant to recognize in an image and what is misrecognized or even completely invisible? Next, we look at the method for introducing images into computer systems and look at how taxonomies order the foundational concepts that will become intelligible to a computer system. These assumptions inform the way AI systems work-and fail-to this day. By excavating the construction of these training sets and their underlying structures, many unquestioned assumptions are revealed. Methodologically, we could call this project an archeology of datasets: we have been digging through the material layers, cataloguing the principles and values by which something was constructed, and analyzing what normative patterns of life were assumed, supported, and reproduced.

We have looked at hundreds of collections of images used in artificial intelligence, from the first experiments with facial recognition in the early 1960s to contemporary training sets containing millions of images. Understanding the politics within AI systems matters more than ever, as they are quickly moving into the architecture of social institutions: deciding whom to interview for a job, which students are paying attention in class, which suspects to arrest, and much else.įor the last two years, we have been studying the underlying logic of how images are used to train AI systems to “see” the world. .jpg)

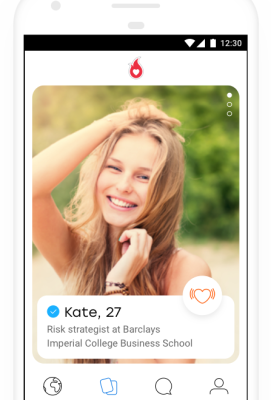

This led to the current moment, in which challenges such as object detection and facial recognition have been largely solved. This arc of inevitability recurs in many AI narratives, where it is assumed that ongoing technical improvements will resolve all problems and limitations.īut what if the opposite is true? What if the challenge of getting computers to “describe what they see” will always be a problem? In this essay, we will explore why the automated interpretation of images is an inherently social and political project, rather than a purely technical one. The story we’ve been told goes like this: brilliant men worked for decades on the problem of computer vision, proceeding in fits and starts, until the turn to probabilistic modeling and learning techniques in the 1990s accelerated progress. In 1966, Marvin Minsky was a young professor at MIT, making a name for himself in the emerging field of artificial intelligence. Deciding that the ability to interpret images was a core feature of intelligence, Minsky turned to an undergraduate student, Gerald Sussman, and asked him to “spend the summer linking a camera to a computer and getting the computer to describe what it saw.” This became the Summer Vision Project. Needless to say, the project of getting computers to “see” was much harder than anyone expected, and would take a lot longer than a single summer. There’s an urban legend about the early days of machine vision, the subfield of artificial intelligence (AI) concerned with teaching machines to detect and interpret images. Where did these images come from? Why were the people in the photos labeled this way? What sorts of politics are at work when pictures are paired with labels, and what are the implications when they are used to train technical systems? 
Things get strange: A photograph of a woman smiling in a bikini is labeled a “slattern, slut, slovenly woman, trollop.” A young man drinking beer is categorized as an “alcoholic, alky, dipsomaniac, boozer, lush, soaker, souse.” A child wearing sunglasses is classified as a “failure, loser, non-starter, unsuccessful person.” You’re looking at the “person” category in a dataset called ImageNet, one of the most widely used training sets for machine learning. But as you probe further into the dataset, people begin to appear: cheerleaders, scuba divers, welders, Boy Scouts, fire walkers, and flower girls. You’re met with thousands of images: apples and oranges, birds, dogs, horses, mountains, clouds, houses, and street signs. You open up a database of pictures used to train artificial intelligence systems.
